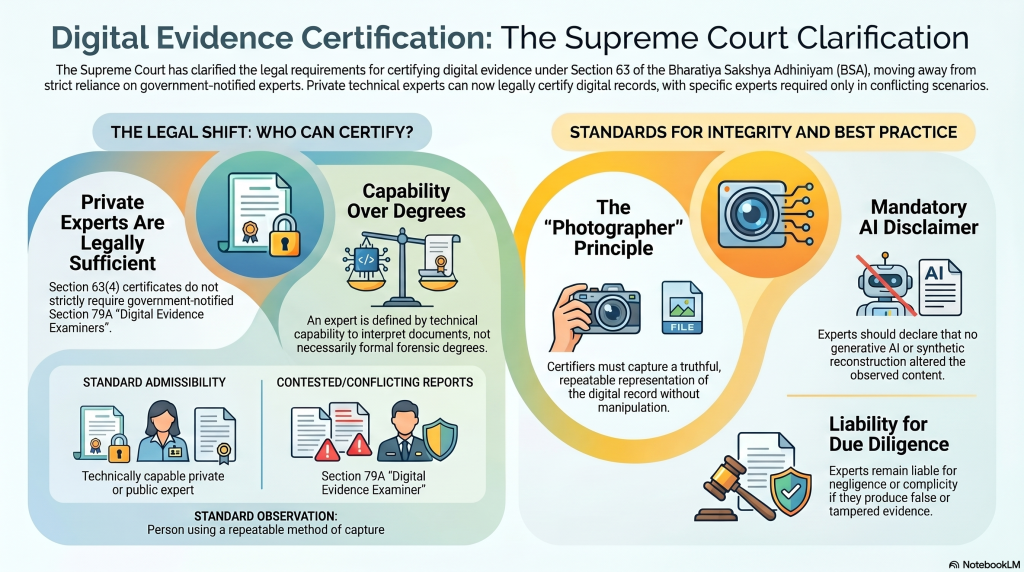

The Supreme Court has provided a welcome clarification on the Pune Bar Association’s wrong interpretation of Section 63 of the BSA. The Bar Association had contended that the section required every Section 63 certificate to be signed by an “Expert” under Section 79A of ITA 2000 and that it was therefore unconstitutional.

Naavi, a pioneer in this field, had interpreted the erstwhile Section 65B of the Indian Evidence Act to mean that the certificate should either contain the printout of the electronic document, certified as part of the certificate as the visual object being certified, or contain the hash value where the evidence is an audio or a video file. Some of the certificates issued by Cyber Evidence Archival Center (CEAC) were based on this principle.
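As an illustration of the hash value mentioned above, the following is a minimal sketch of how a certifier might compute and later re-check the hash of an audio or video file. The choice of SHA-256 and the function names `file_sha256` and `matches_certified_hash` are assumptions made for this example; the post does not prescribe any particular algorithm or tool.

```python
import hashlib


def file_sha256(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of the file at `path`.

    The file is read in chunks so that large audio or video
    files do not need to fit in memory. SHA-256 is an assumed
    choice for this sketch, not one named in the post.
    """
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_certified_hash(path, certified_hash):
    """Check a file against the hash recorded in a certificate.

    Returns True only if the file's current SHA-256 digest equals
    the recorded value (compared case-insensitively).
    """
    return file_sha256(path) == certified_hash.strip().lower()
```

A certifier could record the output of `file_sha256` in the certificate, and anyone examining the evidence later could call `matches_certified_hash` with the same file to see whether it has changed since certification.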

The fact that such a certificate issued by a private person like the undersigned was acceptable was first stated by the trial court in the Suhas Katti Vs State of Tamil Nadu case and upheld by the Sessions Court.

Subsequently, ITAA 2008 introduced Section 79A and with it the role of the “Digital Examiner of Evidence”. However, we had interpreted this as mandatory only when the Court had to choose between two contradictory Section 65B reports. At the stage of admissibility, a certificate from a private person was considered sufficient and could be countered by another Section 65B certificate from another private expert.

When Section 63 of the BSA replaced Section 65B of the IEA, the Government introduced confusion through ambiguous drafting.

Some interpreted this to mean that Part B of the Section 63 certificate had to be filled in by an Expert under Section 79A of ITA 2000. This view had also been supported by a Madras High Court judgement.

Now the Supreme Court bench consisting of CJI Surya Kant and Justice Joymalya Bagchi has rightly held that a Section 63(4) certificate can be provided by “Experts” who need not be those notified under Section 79A of the ITA 2000 as “Digital Evidence Examiners”.

Naavi’s interpretation, which is consistent with the judgement, is that an “Expert” referred to under Section 63(4) should be a person who is technically capable of interpreting the digital document being certified. It is not necessary that he be a “Cyber Forensic Expert”, as some may interpret, nor that such “Cyber Forensic Expertise” come from a “University Degree” or similar formal qualification.

Viewing a digital document on a digital instrument using a specific method and capturing it in a manner that truthfully represents the visual is like a photographer capturing an image with a camera and submitting the output without manipulating the digital copy. The certificate records the method of capture in such a way that any other person with reasonable expertise can repeat the process and should obtain a similar result, provided the document has not been altered after the certification.

Disputes arise where an expert has certified a document which is then modified by the person in charge, or re-created using a different method (say a different browser or different filters, which could alter the rendition), and is thereafter certified by another expert in good faith. In such a case two versions of the same document may exist, each certified by an expert in good faith but with different content. The Court may then have to call in a Section 79A expert to satisfy itself as to which version has to be relied upon in the specific context.

If any certifying expert has not acted with due diligence, it is negligence on his part, which can even be argued to be complicity in producing false evidence. If he has acted in good faith, there may be no error on his part, and whoever altered the document could be charged with tampering with evidence.

One specific disclaimer which Naavi advises certifiers to use now is to declare that “The process used to capture the document did not use technology such as AI which could affect the integrity of observation”.

A probable rendition of the disclaimer may state:

“To the best of my knowledge, the process adopted for capture, preservation and certification of the electronic record did not employ any generative AI, synthetic reconstruction, automated enhancement, interpolation or similar technology that could materially alter the observed content”

We welcome the Supreme Court verdict which clarifies the position.

(Watch out for a more detailed post in due course)

Naavi

Also Refer:

Section 63..Naavi’s Perspective
