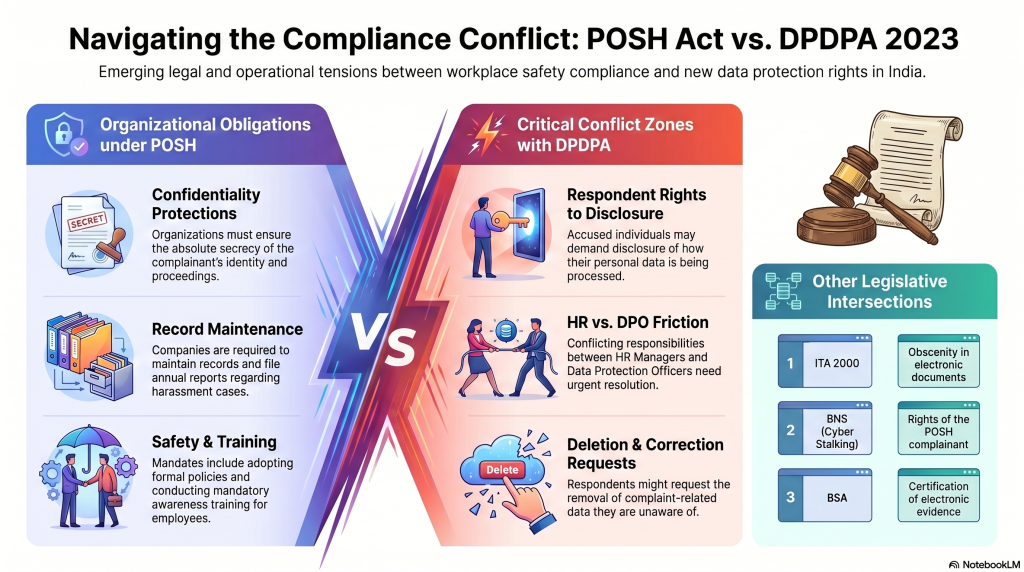

The potential conflict between the implementation of the Digital Personal Data Protection Act, 2023 (DPDPA 2023) and the Right to Information Act has already been recognized and is currently under consideration before the Supreme Court. However, another important area of possible regulatory overlap appears to have escaped the attention of both industry and compliance professionals: the interaction between the POSH Act and DPDPA 2023.

The Sexual Harassment of Women at Workplace (Prevention, Prohibition and Redressal) Act, 2013 (POSH Act) applies to both public and private sector organizations and imposes several statutory obligations on employers. These include:

- Constituting an Internal Committee (IC) comprising a senior woman employee as Presiding Officer, at least two employee members, and an external member.

- Providing a safe working environment for women employees.

- Formulating and communicating a POSH policy.

- Conducting awareness and training programs.

- Providing administrative support to the Internal Committee.

- Maintaining confidentiality of complainants and proceedings.

- Providing support and protection to complainants.

- Maintaining records and filing statutory reports.

- Treating sexual harassment as misconduct and imposing appropriate disciplinary action.

While these obligations are well understood from an employment law perspective, the implications under DPDPA 2023 have not yet been adequately examined.

Emerging Areas of Conflict

Several provisions of DPDPA 2023 may create practical challenges when applied to POSH-related investigations and records.

1. Right of the Respondent to Information

DPDPA grants Data Principals the right to know how their personal data is being processed. A respondent in a POSH proceeding may therefore seek details regarding the collection, use, storage, and disclosure of information relating to the complaint.

How should an organization balance such requests with the confidentiality obligations imposed by the POSH Act?

2. Requests for Correction or Erasure

A respondent may exercise rights under DPDPA to seek correction, completion, updating, or erasure of personal data maintained by the organization.

However, records relating to a POSH complaint may need to be preserved for statutory, evidentiary, disciplinary, or appellate purposes. In some situations, the respondent may not even be aware that certain information is being retained as part of an ongoing or concluded POSH process.

Can such requests be denied? If so, under what legal justification?

3. Grievance Redressal Rights

DPDPA requires organizations to establish grievance redressal mechanisms for Data Principals.

If a respondent disputes the handling of their personal data in connection with a POSH investigation, should the matter be addressed through the DPDPA grievance process, the POSH process, or both? The possibility of parallel proceedings cannot be ignored.

4. Rights of Nominees

DPDPA introduces the concept of nomination, under which a nominee may exercise the rights of the Data Principal in the event of the Data Principal's death or incapacity.

The implications of such rights in relation to sensitive POSH records require careful examination. The confidentiality framework under the POSH Act was never designed with such a concept in mind.

Additional Legal Dimensions

The complexity does not end with DPDPA.

Other laws may also influence the rights and obligations of parties involved in a POSH complaint, including:

- The Information Technology Act, 2000, particularly provisions relating to obscene electronic content.

- Relevant provisions of the Bharatiya Nyaya Sanhita (BNS), including offences such as cyber-stalking and electronic harassment.

- The Bharatiya Sakshya Adhiniyam (BSA), particularly provisions relating to admissibility and certification of electronic evidence.

- Employment and disciplinary laws governing workplace misconduct.

Consequently, a single POSH complaint today may involve a complex interplay of privacy rights, evidentiary requirements, employment obligations, criminal law considerations, and data protection principles.

The Need for a Harmonized Approach

Organizations can no longer treat POSH compliance and DPDPA compliance as independent silos. The preservation of confidentiality under POSH may appear to conflict with transparency obligations under DPDPA. Similarly, the rights of Data Principals under DPDPA may collide with statutory record-retention requirements under POSH.

Unless these issues are examined and harmonized, organizations may find themselves caught between competing legal obligations. In practical terms, this could create tensions between the Human Resources function, which is responsible for POSH compliance, and the Data Protection Officer, who is responsible for DPDPA compliance.

The challenge before compliance professionals is therefore not merely to comply with both laws independently, but to develop governance frameworks that enable both statutes to operate without undermining each other.

The issue deserves serious examination before the first major dispute brings these conflicts into sharp focus.

(P.S.: Consequent to this chain of thought, the DGPSI-HR framework may be extended in the next version with implementation specifications that address conflicts with the POSH Act, ITA 2000, and the RTI Act.)

Naavi