While many are rejoicing at the success of ChatGPT and waiting for Google’s Bard to come up with a more efficient NLP system, there is an underlying fear that the growth of AGI and ASI may soon pass the critical stage and start producing rogue and malicious AI programs.

We can soon expect many variants of ChatGPT to surface with many ChatBots on different websites all trying to proclaim that they are “AI Powered”.

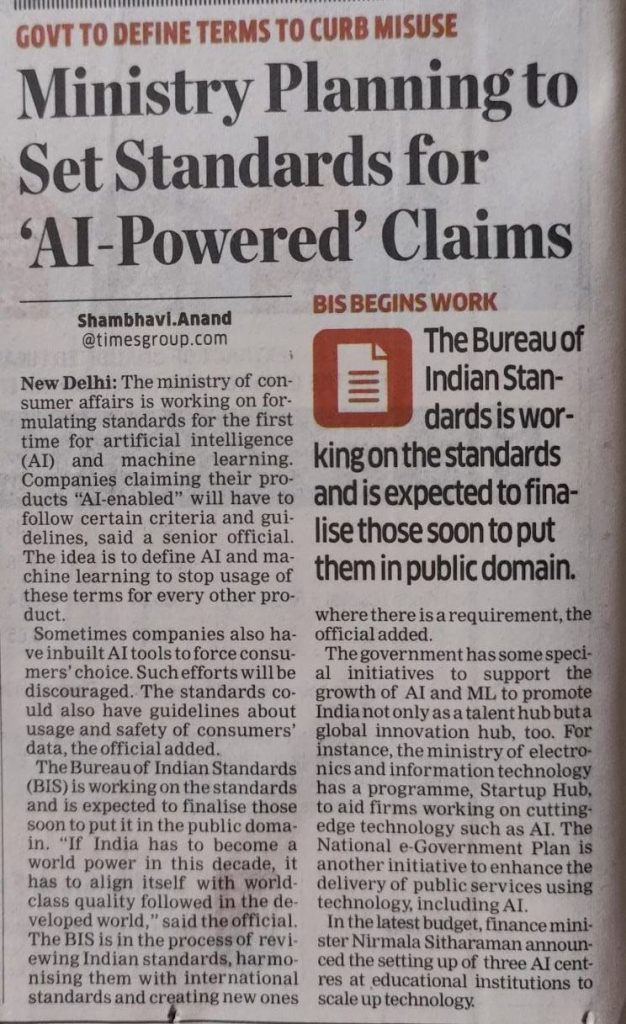

The Indian Government has taken the first step: the Ministry of Consumer Affairs is mandating that companies who want to project their products or services as “AI Enabled” will be subject to certain guidelines.

One concern is that the “AI tag” could be used to mislead the public, and hence the Ministry of Consumer Affairs may bring out some “Disclosure Standards” for claiming the “AI empowered” tag.

The accompanying news report suggests that the Bureau of Indian Standards (BIS) is working on standards and will put them in the public domain.

Just as Google was caught unprepared by OpenAI’s release of ChatGPT, MeitY has been caught off-guard by the announcement that the Ministry of Consumer Affairs will come out with an AI standard.

In a way, MeitY should be concerned that in an area where they should have taken a lead, another department has started acting before them.

While we need to appreciate the Ministry of Consumer Affairs and BIS for the initiative, it is necessary for MeitY to also join them and work in collaboration to develop a standard which is sound.

The definition of “AI” may be wide, encompassing everything from a simple script that automates some activity to IoT devices and robots working in the deep learning domain, so fixing standards of disclosure for consumer awareness would be tough.

It is possible that there will also be many small-time players providing ChatBots which give incorrect responses. Some may be hacked and taken over by malicious actors who will cheat consumers under cover of the “AI Empowered Certification”.

The Ministry of Consumer Affairs will not be able to make a proper assessment of the AI activity on its own, since that requires a deep understanding of the technology.

However, one aspect that we have been asking for as the first regulatory principle, namely “Registration of AI development companies” and “Code stamping of the Registration ID”, can be achieved through BIS registration.

While incorporation of other ethics of AI may take some time, I advocate that we adopt the known laws to cover the AI regulation at least as an immediate measure.

The Suggested Solution

The solution I suggest is to treat AI products as the responsibility of their owners, just as we make parents and guardians responsible for the acts of minors.

The transport department has already made rules that if vehicles are driven by minor children, the parents will be fined.

We can adopt the same principle here and introduce penalties for:

a) Not registering an AI development (applicable to developers)

b) Not registering the use of AI in products (which BIS may be considering now)

c) Making the owner of an AI liable for any adverse consequence of the AI algorithm even if it is registered (so that registration does not become a certification or assurance of functional quality)

This measure can be brought in without any new law, just by notifying an explanation under Section 11 of the Information Technology Act 2000.

This section already states:

Attribution of Electronic Records

An electronic record shall be attributed to the originator

(a) if it was sent by the originator himself;

(b) by a person who had the authority to act on behalf of the originator in respect of that electronic record; or

(c) by an information system programmed by or on behalf of the originator to operate automatically.

This automatically means that the output of an AI is attributed to the owner of the AI program. Hence, if the output is faulty, malicious or damaging, the responsibility falls on the owner of the algorithm. Laws such as the IPC can be invoked where necessary.

The owner of the AI algorithm is initially the developer. Subsequently the liability should be transferred to the user, though ownership for other purposes such as licensing or IPR may remain with the developer.

Hence an explanation can be added to this section to mean the following:

Explanation:

Where the information system is programmed by one person and used by another person, the legal liability arising out of the functioning of the AI algorithm shall be borne by the user.

Where the user is the absolute owner of the algorithm the transfer contract shall include disclosure of the functionalities, the default configurations and the code.

Where the user is only a licensee, the license agreement shall disclose the licensor and the default configuration that affects the functional impact on the consumers.

If the developer does not disclose the required information, he shall be considered as liable for the acts of the AI algorithm.
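The allocation of liability proposed in this explanation can be read as a simple decision rule. The following is only an illustrative sketch in Python, not legal drafting: the names `Deployment`, `Party` and `liable_party` are hypothetical, and it assumes that when the required disclosure is missing, liability rests with the developer alone (the proposed text does not say whether the user also remains liable).

```python
from dataclasses import dataclass
from enum import Enum


class Party(Enum):
    DEVELOPER = "developer"
    USER = "user"


@dataclass
class Deployment:
    """Hypothetical record of how an AI algorithm reached its user."""
    user_is_owner: bool                    # True: outright transfer, False: licence
    functionality_disclosed: bool = False  # transfer contract discloses functionalities
    default_config_disclosed: bool = False # default configuration disclosed
    code_disclosed: bool = False           # source code disclosed (transfer only)
    licensor_disclosed: bool = False       # licensor identified (licence only)


def required_disclosures_made(d: Deployment) -> bool:
    """Check the disclosures the proposed explanation asks for."""
    if d.user_is_owner:
        # Absolute owner: functionalities, default configurations and code.
        return (d.functionality_disclosed
                and d.default_config_disclosed
                and d.code_disclosed)
    # Licensee: the licensor and the default configuration.
    return d.licensor_disclosed and d.default_config_disclosed


def liable_party(d: Deployment) -> Party:
    """User bears liability by default; developer if disclosure is missing.

    Assumption: non-disclosure shifts liability entirely to the developer.
    """
    return Party.USER if required_disclosures_made(d) else Party.DEVELOPER
```

For example, a licensee who was told who the licensor is and what the default configuration does would carry the liability, while a licensee who was told nothing would leave it with the developer.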

This suggestion is somewhat similar to the concept of “Informed Consent” in data protection law, where the Data Controller discloses the details of processing and the data processors to the data subject. The requirement here would be the reverse of that consent mechanism: the transferor of the license rights provides an “Informed Disclosure”, which the transferee shall further disclose to the consumers.

Since this suggestion does not need any change of law, it can be implemented immediately, even before BIS comes up with its recommendations and before our own AI law based on the UNESCO recommendation can be formulated.

Naavi

(Comments welcome)