As the world tries to develop regulations for Artificial Intelligence to prevent privacy abuse, copyright abuse, irrational and unexplainable decisions and so on, a question arises: what exactly is the definition of “Artificial Intelligence”, when does “Software” become “Artificial Intelligence”, and can the known principles of Behavioural Science also be applied to the behaviour of Artificial Intelligence?

Software is a set of instructions that can be read by a device and converted into actionable instructions to peripherals. The software code is created by a human and fed into the system from time to time as updates and new versions. Each such modification is dictated by what the developer has learnt about the behaviour of the software vis-a-vis its expected utility. In this scenario, the legal status of the software and of the software developer is settled. Software is a tool developed by the developer for the benefit of the user. The user takes control of the software through a purchase or license contract and, as the owner of the tool, is responsible for the consequences of its use. Hence when an automated decision causes harm to a person (the user or any third party), the owner (the licensee or the developer) should bear the responsibility. This is clearly laid down in Indian law through Section 11 of ITA 2000.

Despite this, we are today discussing the legal consequences of the use of AI, whether its actions need to be regulated through a separate law and, if so, how.

We are discussing the copyright issues that arise when AI generates a literary work or even software. In the US, courts have held that a work generated by an AI without human authorship is not copyrightable (Thaler v. Perlmutter). Even in India, music created by AI is not held copyrightable (Gramophone Company of India Ltd. v. Super Cassettes Industries Ltd. (2011)). Recently, AI-created videos of the deceased singer SPB have raised a property rights issue: who owns the AI version of SPB?

Copyright and other intellectual property laws are powerful international laws that are protected by treaties and hence are likely to prevail over other new-generation legal issues raised by technology. The upholding of the concept that “Creativity” cannot be recognized in AI also, to some extent, destroys the argument that AI is a “Juridical entity” distinct from “Software”, for which the developer is accountable.

The EU AI Act defines AI as

“a machine-based system that, for explicit or implicit objectives, infers, from the input it receives, how to generate outputs such as predictions, content, recommendations, or decisions that can influence physical or virtual environments”

Earlier definitions used terms like “computer systems that can perform human-like tasks” such as “seeing”, “hearing” and “touching”, and convert them into recordable experiences.

In the current state of the industry, AI has developed into Generative AI algorithms and humanoid robots. In such use cases, the definition of AI touches on concepts such as “Intuition”, “Restraint” and “Discretion”, which are attributes of the human intellect.

For example, a human does not react the same way to every similar stimulus. Sometimes humans get angry and are not able to show discretion in action. Sometimes they are.

What is it to be Human Like in terms of behaviour?

“Software” and “Artificial Intelligence” are not two binary positions, and there is no clear line of demarcation between them. However, to be clear about the legal position of AI, it is necessary to understand what exactly Artificial Intelligence is and whether there is a proper legal definition of when “Software” becomes “Artificial Intelligence”. An AI algorithm is normally not able to show the kind of discretion that a human shows.

A human can “Forget” and “Move On”. A computer is not able to “Forget”, and hence every action it takes is a reflection of its previous learning. Even if we build a model in which the behaviour of the AI changes statistically with each new experience, human behaviour has an element of spontaneity that an AI lacks.

Thus software that is coded to change its future output based on statistical analysis of new inputs, by modifying the code created by the “original coder” of Version Zero, is knocking at the doors of being called an “Automated decision-making system with self-learning ability”. This is often called Artificial Intelligence based on Machine Learning technology.
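To make the idea of a self-adjusting system concrete, here is a minimal sketch in Python. It is only an illustration under stated assumptions: the class name `SelfAdjustingDecider`, the starting threshold of 50 and the rule of moving the threshold to the running mean are all hypothetical, and the “self-modification” here is a change to a stored parameter rather than a literal rewriting of source code.

```python
from dataclasses import dataclass


@dataclass
class SelfAdjustingDecider:
    """Hypothetical decision system whose rule shifts with each new input."""

    threshold: float = 50.0  # the "Version Zero" rule set by the original coder
    seen: int = 0            # number of inputs observed so far
    mean: float = 0.0        # running mean of the observed inputs

    def decide(self, value: float) -> bool:
        """Approve when the value meets the current threshold."""
        return value >= self.threshold

    def learn(self, value: float) -> None:
        """Update the running mean and move the threshold to that mean."""
        self.seen += 1
        self.mean += (value - self.mean) / self.seen
        self.threshold = self.mean


decider = SelfAdjustingDecider()
before = decider.decide(40.0)  # judged by the coder's original rule
for observed in (30.0, 35.0, 25.0):
    decider.learn(observed)
after = decider.decide(40.0)   # same input, judged by the learned rule
print(before, after)           # False True
```

The same input is rejected under Version Zero and accepted after three new experiences, yet every step remains a statistical consequence of what the system has seen.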

The inputs to such a system may come from sensors such as a camera or a microphone, but they are interpreted by software that converts the binary inputs into some form of sight and sound.

This process is similar to the human brain, which also receives inputs from its sensory organs and processes them, sometimes with reference to earlier recorded experiences (which we may call prejudice).

But the difference between human intelligence and artificial intelligence is that human responses are not all the same. They vary with several known and unknown factors. If we try to remove this characteristic of human behaviour, we will be “de-humanizing” decisions in society and converting society into an artificial one.

The objective of any law is to preserve the good qualities of society, and one such good quality is the unpredictability of the human mind. The “Creativity” aspect of software, which often comes up in IPR cases, arises out of this need to preserve human character. We do not want all humans to be zombies. One impact of this can be seen in computer games. If we are playing tennis, cricket or golf on the computer, we know that a certain type of action on the keyboard results in a certain type of swing of the bat on the screen, while in a real situation a sportsperson has many innovative ways of dealing with the same ball. It does not seem ideal to remove this creativity and the beauty of uncertainty and make every short ball go for six, when in reality many rank bad balls result in wickets.

In Generative AI, we have often seen “Rogue” behaviour, where the algorithm behaves “Mischievously” or “Creatively”. Whether this rogue behaviour is itself “Creativity”, and an indication that the “Software” has become “Human” because it can make mistakes, is a point to ponder.

The thought that emerges out of this discussion is that as long as software is bound to a predictable pattern of behaviour, it remains software. But when software is capable of behaving in an unpredictable manner, it is not becoming “Sentient” but actually becoming “Human”.

A dilemma arises here. “To Err is Human” and hence one view is that unless a Computer learns to err, it cannot be called “Human like”.

But if AI is allowed to “Err”, it will lose the benefit of being a “Computer”, where 2+2 is always 4. It is only the human mind that asks why 2+2 must always be 4.
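The distinction between predictable and unpredictable behaviour can be sketched as a simple probe: give a system the same stimulus many times and check whether it always responds the same way. This is only an illustration; the function names `is_predictable`, `fixed_rule` and `noisy_rule` are hypothetical, and the random offset is merely a stand-in for “erring”, not a model of human spontaneity.

```python
import random
from typing import Callable


def is_predictable(system: Callable[[int], int], stimulus: int, trials: int = 20) -> bool:
    """Return True when repeated identical stimuli always give one response."""
    responses = {system(stimulus) for _ in range(trials)}
    return len(responses) == 1


def fixed_rule(x: int) -> int:
    # Conventional software: the same input always maps to the same output.
    return x + x


rng = random.Random(7)  # seeded only so that the sketch is repeatable


def noisy_rule(x: int) -> int:
    # Assumed stand-in for a system that can "err": the answer may drift by one.
    return x + x + rng.choice([-1, 0, 1])


print(fixed_rule(2))                  # 4
print(is_predictable(fixed_rule, 2))  # True
print(is_predictable(noisy_rule, 2, trials=50))
```

A system that passes this probe keeps the computer's benefit of 2+2 always being 4; one that fails it has given up that benefit, which is exactly the dilemma described above.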

“To err”, “to forget”, “to show discretion” and “to do things in a way they have never been done before” are human characteristics that today’s so-called Artificial Intelligence algorithms may not exhibit. Until they do, the AI of today remains only software and has to be treated as “Software” in terms of legal implications, with responsibility for its actions determined by the software development and license terms.

The Future

In the future, when software is capable of behaving like a human, with an ability to “Feel” and to “Alter its behaviour based on the feeling”, we should consider that the software has become AI in the real sense. The new laws of AI will then be applicable only when the software reaches the maturity level of a human.

In the case of laws applicable to humans, we have one set of laws applicable to a “Minor” and another applicable to an “Adult”. It is expected that a human becomes capable of taking independent decisions only after a certain age is attained. There is a serious flaw in the argument that a person becomes an adult at the stroke of midnight on a particular day; we do not even measure age from the exact time of birth, adjusted for time zone. Yet we have been living with this imperfect law all along.

Now, when we consider the transition of software to AI, we need to consider whether to introduce a more reliable measure of whether software can be considered AI, for which criteria have to be developed along with a system of testing and certifying.

In other words, all software remains “Software” unless “Certified as AI”. As long as software remains software, responsibility for it remains with the original developer/owner or the licensee. The certification is like a “Birth Certificate” in the case of a human being. The birth of an individual does not go on record until it is registered and certified. Similarly, software does not become eligible to be called “AI” unless it is registered and certified.

The “Certification” that a software is an “AI” has to be provided by a regulatory agency based on certain criteria. The argument that we put forth is that the criteria have to take into account the ability of an AI to err, to forget, to show restraint, to be innovative and so on.
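The “Software unless certified” default can be expressed as a small rule. The sketch below is purely illustrative: the names `TraitAssessment` and `legal_status` are hypothetical, the four traits are the ones listed above, and the assumption that all four must be demonstrated before certification is mine rather than a settled criterion.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TraitAssessment:
    """Hypothetical result of a regulator's tests on one piece of software."""

    can_err: bool
    can_forget: bool
    shows_restraint: bool
    is_innovative: bool


def legal_status(assessment: Optional[TraitAssessment]) -> str:
    """Default to 'Software'; only a full pass earns the 'AI' label."""
    if assessment is None:  # never tested, hence never certified
        return "Software"
    traits = (
        assessment.can_err,
        assessment.can_forget,
        assessment.shows_restraint,
        assessment.is_innovative,
    )
    # Assumption: every trait must be demonstrated before certification.
    return "AI" if all(traits) else "Software"


print(legal_status(None))                                     # Software
print(legal_status(TraitAssessment(True, True, True, False)))  # Software
print(legal_status(TraitAssessment(True, True, True, True)))   # AI
```

Under this rule, anything that has not been tested, or that fails even one test, stays “Software”, and responsibility stays with the developer/owner or the licensee.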

Can we develop such a character mapping of an AI? This leads to a new thought: “AI Transaction Analysis”.

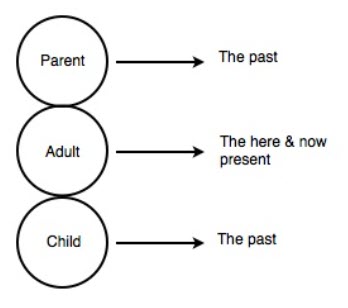

Dr Eric Berne postulated that a human behaves from one of three ego states, namely the Parent, the Adult and the Child.

The accompanying diagram shows the typical description of the three ego states: Parent, Adult and Child (PAC).

The “Parent” ego state in the context of an AI is a reflection of the “GIGO” (garbage in, garbage out) principle: there are set instructions, and the output is determined by the input.

The “Adult” ego state in the context of AI is a reflection of the unsupervised learning ability of an AI. It is a logical response to an input stimulus.

The “Child” ego state is the creative and unpredictable nature of humans.

Eric Berne and the researchers who followed him further divided the ego states. For example, the Child ego state was subdivided into “Compliant” (Adapted Child) and “Rebellious” (Natural Child), and the Parent ego state into “Nurturing Parent” and “Critical Parent”.

It is time that we apply these principles to identify and certify the maturity status of AI. I call upon behavioural scientists to come in and start contributing towards flagging software as AI by applying the PAC principle.

To map the PAC of an individual, behavioural scientists have developed many tests. Similarly, we need to design tests for AI categorization. I experimented with such scenario-based tests during my stint as a faculty member in the Bank in the early 1980s. Practitioners of behavioural science have many advanced tests to map the PAC state of a human, and these can be applied to test and certify an AI as well.

We need to think about whether AI regulation should take into account such a classification of AI by ego state instead of the classification adopted in the EU Act.

Open for debate…


Naavi
