In recent days the menace of Digital Arrest related scams has assumed alarming proportions. There are instances of people losing crores of rupees to this scam, and some of these matters are coming up for discussion in Courts.

Naavi has been a long-time follower of “hypnotism” and has attributed to it some of the otherwise illogical behaviour of Cyber crime victims, such as the children who succumbed to the Blue Whale game. He has also held out some analysis in the case of old people succumbing to Phishing frauds. (Refer here).

The time has come to once again look back on the science of Hypnosis and understand whether there is an instance of “Cyber Hypnosis” that can explain some of the irrational behaviour of victims of Digital Arrest.

Aged persons living alone are psychologically vulnerable to friendly suggestions, even when they come from strangers. People with dissociative identity disorder, and people who have a history of childhood abuse or other trauma, could be more vulnerable than others to falling prey to cyber hypnosis.

Hypnosis, as a traditional theory, suggests that the human brain has a subconscious part which the hypnotist awakens and establishes contact with, putting part of the conscious mind, including the rational part, to sleep. As a result, the hypnotist is able to give suggestions that the subject finds difficult to ignore, and the subject becomes a puppet doing what is suggested.

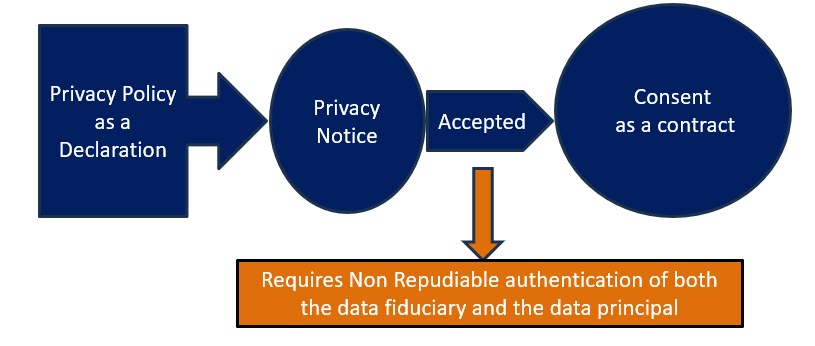

Under this state of “Trance”, the ability of the individual to take rational decisions is sidelined, and therefore any contractual commitments made during that time are invalid. It is like “Persons in an intoxicated state of mind” or the “Occasionally insane” being held not to fulfil the conditions of “Free Consent” for a contract.

The fact that two Banks were recently able to identify this state in a customer and talk him out of the fraud is an indication that the state can be identified by an alert bystander. It is like a person in a hypnotic trance exhibiting a blank look which appears out of the ordinary.

It is therefore necessary for law to take into account that a contract undertaken under this trance is not a valid contract.

It is a fact that in digital transactions the Bank which executes the instructions of this customer may not find it easy to identify the abnormality of the situation. But if the amount involved is large and not commensurate with the usual habit of the customer, the requirement of “Adaptive Authentication” mandated by RBI requires the Banker to identify the transaction as requiring some caution. Failure to do so should be considered “Negligence”.
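
As a rough illustration of what “not commensurate with the usual habit of the customer” could mean in practice, the sketch below flags a transfer whose amount is far above the customer's typical past transfers. The function name, the multiplier and the sample figures are all hypothetical assumptions made for this example. It is not the method prescribed by RBI or used by any Bank, only a minimal sketch of the idea.

```python
from statistics import median


def needs_extra_caution(past_amounts, new_amount, multiplier=10.0):
    """Return True when new_amount is far above the customer's usual amounts.

    Assumption: "usual habit" is approximated by the median of past
    transfers, and "large" means more than `multiplier` times that median.
    Both choices are illustrative, not a regulatory rule.
    """
    if not past_amounts:
        # No history to compare against, so treat the transfer with caution.
        return True
    usual = median(past_amounts)
    return new_amount > multiplier * usual


# Hypothetical past transfers of one customer, in rupees.
history = [2_000, 5_000, 3_500, 10_000, 4_000]

print(needs_extra_caution(history, 6_000))       # False: in line with past habit
print(needs_extra_caution(history, 2_500_000))   # True: far above past habit
```

A flag of this kind would not block the transaction by itself. It would only mark it as one where the Banker ought to pause and make further checks with the customer.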

There is no doubt that the Banker at the fraudster's end is directly involved in the fraud, with an apparent failure of KYC. The Banker at the customer’s end, being part of the Banking Chain, cannot fully absolve himself of the responsibility for money laundering in this type of fraud.

Since the victim's privity of contract is with the Bank at his end, it is natural that the relief to the customer should come from that Bank. Later this Banker can recover the money from the Banker at the fraudster’s end. This is an extension of the “Contributory negligence” and “Intermediary responsibility” for which the Banks should be held liable.

This should be the jurisprudence in matters related to Digital Arrest and I hope Courts take cognizance of this menace of “Cyber Hypnotism” and provide appropriate relief to the victims.

Naavi