At present date, Quantum Computing stands towards traditional computing like a horse did towards the Wright Brothers’ plane. The horse was much faster, but the plane could move in a tridimensional space. And we all know how the horse and the plane evolved since then, now don’t we?

Geordie Rose, founder of D-Wave, 2015

To address this topic, and to place it in the context of themes such as Artificial Intelligence, Secure Corporate Communications and Competitive Edge in the marketplace, amongst others, we must start by clearly defining WHAT computing is and WHERE Quantum Computing stands out.

So, Computing as we know it

A computer is a device that manipulates data by performing logical operations; computing is that very act of “manipulation”, which combines data and turns it into value-added information.

The software is the set of instructions that convey what needs to be done with the data, while the hardware is the set of electronic and mechanical components over which the data operations take place according to the provided instructions.

While the core of our universe is the “subatomic world”, meaning the Quantum particles that make up the atom’s basic components (Protons, Neutrons, and Electrons), the core of computing (as we humans have developed it) consists of two logical states, On and Off (1/0), and its “base element” is called the “bit”.

So, it is a binary system whose basic components (the bits) are unambiguously in one of two states, either “1” or “0”.

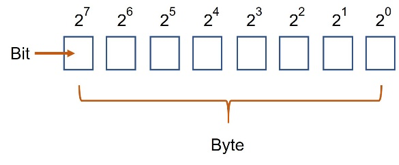

Mathematically, bits are grouped in clusters of 8, called “bytes”. The logic behind a byte is that each bit, from the far right to the far left (of the 8), represents a power of 2, meaning:

- the bit farthest to the right is 2 raised to the power of 0, therefore representing the number 1

- the next one to the left is 2 raised to the power of 1, therefore representing the number 2

- the bit farthest to the left is 2 raised to the power of 7, therefore representing the number 128

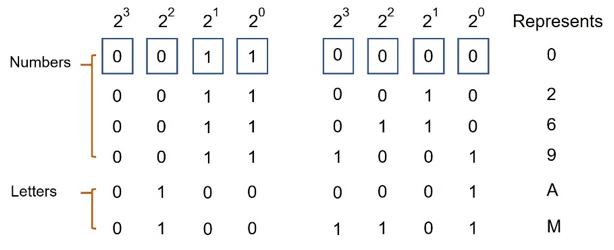
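The positional logic of a byte can be sketched in a few lines of Python. This is only an illustration: the names `byte_to_int` and `WEIGHTS` are made up for this example and do not come from any library.

```python
def byte_to_int(bits: str) -> int:
    """Convert an 8-character string of '0'/'1' into the number it represents."""
    if len(bits) != 8 or set(bits) - {"0", "1"}:
        raise ValueError("expected exactly 8 binary digits")
    total = 0
    # Walk from the rightmost bit (weight 2**0) to the leftmost bit (weight 2**7).
    for position, bit in enumerate(reversed(bits)):
        if bit == "1":
            total += 2 ** position
    return total


# Weight of each position, leftmost bit first.
WEIGHTS = [2 ** p for p in range(7, -1, -1)]

print(WEIGHTS)                   # weights from 128 down to 1
print(byte_to_int("00000101"))   # 4 + 1
print(byte_to_int("11111111"))   # all eight weights added together
```

With all eight bits set to “1” the byte reaches its maximum value, 255, which is why one byte can hold 256 different values (0 to 255).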

Now, our “modern” computers also needed an agreed way to tell which kind of data a given byte holds: a number, a letter, an instruction or something else. The ASCII table is that convention: it assigns a 7-bit code, which fits within one byte, to each character. Broadly speaking, the leftmost bits of the code indicate the type of character (control instruction, digit or punctuation, uppercase letter, lowercase letter), while the 4 bits on the right select the specific character within that group.
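As a small illustration of how a character table such as ASCII ties byte values to characters, the sketch below uses Python’s built-in `ord` to look up the code of a few characters and prints it as two 4-bit halves. The helper name `describe` is made up for this example.

```python
def describe(char: str) -> str:
    """Show a character, its numeric code, and that code as two 4-bit halves."""
    code = ord(char)                        # numeric value assigned to the character
    high, low = code >> 4, code & 0b1111    # left half and right half of the byte
    return f"{char!r}: {code:3d} = {high:04b} {low:04b}"


for ch in ("A", "a", "7", " "):
    print(describe(ch))
```

Running it shows that “A” and “a” share the same right-hand half and differ only in the left-hand bits, which is the grouping by character type at work.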

The evolution of computing expanded this initial design both in the number of bits used to handle information and in the speed at which operations take place.

From 8 bits in the 1970s we moved to 16, 32 and 64, while the speed rose from a few megahertz to 1 gigahertz, then 2, 4, and it keeps evolving.

In 1965, Gordon Moore, co-founder of Fairchild Semiconductor and Intel, predicted (based on observation) that the number of transistors in a dense integrated circuit would double every year for the following decade; in 1975 he revised the pace to a doubling roughly every two years, with computing capacity growing accordingly. In fact, that rate has now been observed for several decades, and it constitutes Moore’s Law.
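To get a feel for what a doubling every two years means, here is a minimal sketch. The function name and the starting figure of 1,000 transistors are hypothetical, chosen only to keep the numbers round.

```python
def projected_transistors(start_count: int, years: float,
                          doubling_period: float = 2.0) -> float:
    """Project a transistor count that doubles once every `doubling_period` years."""
    return start_count * 2 ** (years / doubling_period)


# Hypothetical starting point of 1,000 transistors (illustrative only).
for years in (2, 10, 20, 40):
    print(years, "years:", round(projected_transistors(1_000, years)))
```

After 20 years the count has doubled ten times, so it is about a thousand times larger; after 40 years it is about a million times larger.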

Quantum Computing

Quantum computers are similar to “traditional” ones in the sense that they also use a binary system to characterize data. The difference lies in the fact that Quantum computers use one particular characteristic of subatomic particles (specifically, electrons), called the “Spin”, to account for the status “0” or “1”.

The Spin is a property of subatomic particles, often pictured as a rotation, that is “manageable” because it responds to magnetic fields. Therefore, in very, very simple wording: while in “traditional” computers humans control the bit status by applying or not applying power to a given bit, in Quantum Computers we can set the status “Spin-up”, which corresponds to “1”, or “Spin-down”, which corresponds to “0”, by applying either a variation in a magnetic field or a focused microwave pulse.

And what a difference this makes!

Once we move beyond the atomic world and start manipulating electrons one by one, very strange things take place.

Note: electrons are a particle of choice because they are the “easiest” to extract from an atom. An extracted electron does not itself become a photon; when information has to be transported over distance, photons (particles of light) are used to carry it.

Subatomic particles behave both as matter and as waves, and have the extraordinary characteristic of being able to represent both the Spin-up and the Spin-down status at the same point in time.

Rather than spend a couple of thousand words describing in detail how this is possible and all the multidimensional implications it carries (parallel universes and so on …), I will just advise you to take a look at Professor Richard Feynman’s lectures on Quantum Physics.

Now, because of this specific characteristic of Quantum particles, this is the point where any similarity between “traditional” computers and Quantum Computers ends.

To make the picture crystal clear: in order to test all possible combinations of a set of just 4 bits, so that the one that applies to a given circumstance may be found, a “traditional” computer goes through each of the 16 combinations (from 0000 to 1111) one at a time.

That takes up to 16 different operations.
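The one-at-a-time search a “traditional” computer performs can be sketched as follows. The function `find_combination` and the matching rule passed to it are hypothetical, used only to count the tries.

```python
from itertools import product


def find_combination(is_match, n_bits: int = 4):
    """Try every n-bit pattern in turn; return (pattern, number of tries)."""
    for tries, bits in enumerate(product("01", repeat=n_bits), start=1):
        pattern = "".join(bits)
        if is_match(pattern):
            return pattern, tries
    return None, 2 ** n_bits   # nothing matched after trying every pattern


all_patterns = ["".join(bits) for bits in product("01", repeat=4)]
print(len(all_patterns))                          # number of 4-bit patterns
print(find_combination(lambda p: p == "1111"))    # worst case: the last pattern tried
```

In the worst case the pattern being looked for is the last one tested, so all 16 tries are needed; each extra bit doubles that count.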

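Now, since the Quantum computer’s bits (called Qubits) have the capacity to represent both states at the same time, a 4-Qubit Quantum computer can hold all 16 combinations at once, so one single operation acts on all of them! The catch is that reading the result returns only one combination, which is why purpose-built Quantum algorithms are needed to make the right one stand out.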

If, instead of “half a byte” (4 bits, as above), we speak of latest-generation software that deals with 128 bits, guess what? Acting on all possible combinations of those 128 bits would, in principle, require just one single operation on a 128-Qubit Quantum Computer!

I think that, by now, you are starting to get a picture of the potential involved. Still, let me give you a “hand” here: a 512-Qubit Quantum Computer could represent 2 to the power of 512 combinations in one single operation, a number far greater than the number of atoms in the observable Universe (roughly 10 to the power of 80).
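The arithmetic behind that comparison is easy to check, since Python handles arbitrarily large integers. The figure of 10 to the power of 80 atoms is a rough, commonly cited estimate for the observable Universe and is used here as an assumption.

```python
# Rough, commonly cited estimate (an assumption, not a measured value).
ATOMS_IN_OBSERVABLE_UNIVERSE = 10 ** 80


def state_count(n_qubits: int) -> int:
    """Number of distinct bit combinations that n qubits can represent."""
    return 2 ** n_qubits


for n in (4, 128, 512):
    count = state_count(n)
    print(f"{n} qubits: a {len(str(count))}-digit number of combinations;",
          "more than the atom estimate:", count > ATOMS_IN_OBSERVABLE_UNIVERSE)
```

Note that 128 qubits do not yet exceed the atom estimate, while 512 qubits exceed it by many orders of magnitude.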

And Quantum computing has a “Moore’s law” of its own: instead of processing capacity doubling every two years, each new generation has reportedly been up to 500 thousand times more powerful than the preceding one.

Going back to the analogy between the horse and the Wright Brothers’ plane, it is as if that plane had given birth to the Lockheed SR-71A Blackbird, which can fly at a speed of almost 2,200 miles per hour… now imagine what will happen a couple of generations into the future…

Constraints

Here are some of the constraints that stand in the way of true, to-the-letter Quantum Computers:

- The environment

As previously mentioned, the phenomenon that allows Quantum computing to be such a powerful tool resides in the ability of subatomic particles to simultaneously represent several states; in Physics, this is called “superposition”.

Now, unlike, say, Quartz, which is used in modern-day clocks because its crystals vibrate at a constant rate that allows high precision across a wide range of environmental conditions (pressure, temperature, humidity, luminosity and so on …), superposition only happens if no external factors are “exciting” the subatomic particles. In other words, the particles only behave like that for as long as they remain shielded from any external factor.

It would be enough for sunlight to hit a Quantum Computer chip to render it ineffective.

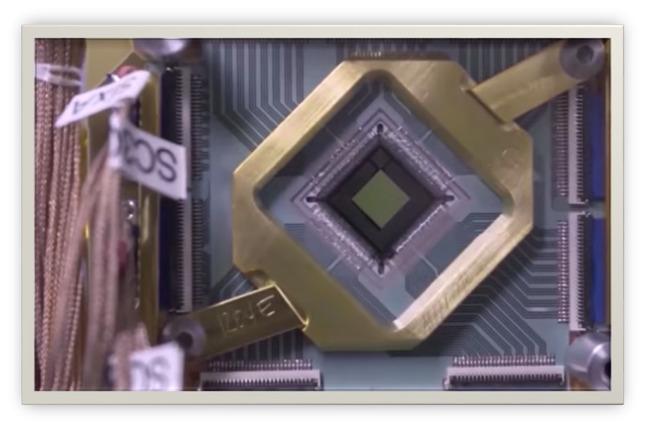

Therefore, a Quantum Computer is basically composed of one chip the size of a fingernail and a supporting cooling and isolation shell the size of an SUV that ensures the required “sterile” and isolated operational environment, and it costs around $25 million.

- Algorithms

Writing algorithms for Quantum Computers requires the ability to think in terms of the laws of Quantum Mechanics and take them into account; it is therefore not a task for the common developer.

Peter Shor, from MIT, developed a Quantum Algorithm (the “Factoring algorithm”) that brought the intelligence community to the verge of a nervous breakdown, because it would render most of today’s public-key encryption ineffective. Basically, while the most powerful standard computer would take hundreds of years of continuous processing to get there, if tomorrow any of us had the chance to bring home a sufficiently large Quantum Computer with the Factoring Algorithm embedded in a piece of software, we could break RSA encryption in a very short time, making all the bank accounts or electronic transactions that we could “look at” absolutely transparent.
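To show the arithmetic idea behind the Factoring algorithm, here is a purely classical sketch for a tiny number. It is not a quantum program: the step that finds the “order” of a number is done by brute force, and that is precisely the step a Quantum Computer would speed up. The function names and the choice of 15 and 7 are made up for this illustration.

```python
from math import gcd


def find_order(a: int, n: int) -> int:
    """Smallest r > 0 such that a**r % n == 1, found by brute force.

    Assumes gcd(a, n) == 1. This is the step a quantum computer would accelerate.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_via_order(n: int, a: int):
    """Classical post-processing: turn the order of `a` into two factors of n."""
    shared = gcd(a, n)
    if shared != 1:
        return shared, n // shared      # lucky guess: a already shares a factor
    r = find_order(a, n)
    if r % 2 == 1:
        return None                     # odd order: retry with another a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None                     # trivial square root: retry with another a
    p = gcd(half - 1, n)
    return p, n // p


print(find_order(7, 15))         # the order of 7 modulo 15
print(factor_via_order(15, 7))   # two factors of 15
```

For 15 the brute-force search is instant, but for the very large numbers used in real keys the order-finding loop is what becomes hopeless on a standard computer.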

Lov Kumar Grover, who holds a Ph.D. from Stanford and did this work at Bell Laboratories, developed a Database Query Quantum Algorithm that is able to find the right entry in a vast unstructured database in roughly the square root of the number of steps a standard search would need. Like finding a needle in a colossal haystack in a fraction of the usual time.
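The idea behind Grover’s search can be imitated on a standard computer by tracking the “amplitude” of every database entry in a list. The sketch below is such a classical simulation, under the usual textbook description of the algorithm (flip the sign of the marked entry, then reflect all amplitudes about their mean); the function name and the sizes used are illustrative assumptions.

```python
from math import floor, pi, sqrt


def grover_search(n_items: int, marked: int):
    """Classically simulate Grover's amplitude amplification.

    Returns (probability of reading out the marked item, rounds used).
    """
    amplitudes = [1 / sqrt(n_items)] * n_items      # start: every item equally likely
    rounds = floor(pi / 4 * sqrt(n_items))          # about sqrt(N) rounds are enough
    for _ in range(rounds):
        amplitudes[marked] = -amplitudes[marked]    # oracle: flip the marked item's sign
        mean = sum(amplitudes) / n_items
        amplitudes = [2 * mean - a for a in amplitudes]   # reflect about the mean
    return amplitudes[marked] ** 2, rounds


probability, rounds = grover_search(n_items=1024, marked=42)
print(rounds, round(probability, 3))
```

With 1,024 entries, a couple of dozen rounds are enough to make the marked entry almost certain to be read out, whereas checking entries one by one would need around 512 tries on average.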

- Particle manipulation

The Quantum Computers that exist today are technically only partially quantum, since they are able to use strings of electrons and not yet each electron individually. However, a laboratory experiment at the University of New South Wales in Australia has recently managed to do so; therefore, maybe the next generation of Quantum Computers will.

Potential

All of this is something that is being developed “as we speak”.

In 2011 the development stage of Quantum Computers allowed the tremendous accomplishment of calculating in one single operation the expression 3*5=15. Yes, just that …

Back then (in 2011), Dr. Michio Kaku, who is one of the brightest minds of our era, stated in an interview that it was not clear when we would have the first operational and useful Quantum Computers.

Four years later, in 2015, D-Wave (a Canadian company that produces Quantum Computers), having already developed a Quantum Computer for Lockheed Martin (the company that, amongst many other military assets, produced the F-22 Raptor fighter jet), produced another one whose resources are being shared by Google, NASA and USRA to perform calculations that normal computers, no matter how powerful they are, cannot accomplish within a reasonable time frame (meaning less than 100 years of working non-stop).

This last machine has been used since 2015 for:

- Artificial Intelligence investigation and development

- Development of new drugs

- Autonomous machine navigation

- Climate change modeling and predictions

- Traffic control optimization

- Linguistics

Building a Quantum Computer doesn’t mean a faster computer, yet a computer that is fundamentally different than a standard computer.

Doctor Dario Gil, Head of IBM Research

We are flabbergasted by the number of things standard computers are capable of solving and how fast they do it, yet there are several things they either cannot solve or would take so long to solve that the answer would bring us no benefit.

Can’t think of any?

Well, here are some:

M=p*q – If someone gives you a number M which is the product of two unknown, very large prime numbers (p and q) and asks you to find them, the task is extremely hard even though only one pair of prime numbers meets the requirement: it takes a huge number of sequential trial divisions by prime numbers to get there. It is in fact so difficult that it is used as the basis for RSA encryption, remember from above?
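The sequential divisions mentioned above can be sketched as follows. This is a minimal illustration of trial division on small numbers; the function name and the sample primes are chosen for this example only.

```python
def trial_division(m: int):
    """Find (p, q) with p * q == m by testing divisors in increasing order.

    Returns the factor pair and how many candidate divisors were tested.
    """
    tested = 0
    candidate = 2
    while candidate * candidate <= m:
        tested += 1
        if m % candidate == 0:
            return (candidate, m // candidate), tested
        candidate += 1 if candidate == 2 else 2   # after 2, test odd numbers only
    return (1, m), tested                          # no divisor found: m is prime


print(trial_division(15))
print(trial_division(10007 * 10009))   # product of two small primes
```

Even for two five-digit primes, thousands of divisions are needed. The primes used in real RSA keys have hundreds of digits, so the number of divisions grows far beyond what any standard computer can perform.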

By the way, the D-Wave machines are not yet at the maturity point which allows dealing with such extremely complex problems.

Highly advanced alloys – molecules form when electron orbits overlap. With well-known simple elements, like Hydrogen and Oxygen, it is very easy to determine the outcome of such a combination (H2O, or water). If we use highly complex elements while attempting to create new materials, however, tremendous computing power and a great deal of trial and error are required, because those molecular bonds depend on Quantum Mechanics.

The simplest example can mean 2 to the power of 80 combinations that need to be calculated to reach the solution that leads to a stable molecule, which would take years on a standard computer but potentially just minutes on a sufficiently capable Quantum Computer.

A quantum processor was successfully used in 2016 by a joint team composed of participants from Google, Harvard University, Lawrence Berkeley National Laboratory, Tufts University, UC Santa Barbara and University College London to simulate a Hydrogen molecule. This opens the door to the accurate simulation of complex molecules, which may lead to much faster and far less costly achievements in the fields of medicine and new materials.

Logistics optimization – Logistic systems are some of the most complex day-to-day contexts that humans face, and they have a tremendous financial impact on the global economy. Let’s consider the example of DHL: this international corporation’s Core Business is based on getting a given physical asset from geography A to geography B within the time frame that its clients expect when hiring it. To accomplish that, the company has several “back to back” service contracts running with logistic operators, besides having its own fleet of planes, boats, and cars. Nevertheless, keeping the entire system optimized is hard enough even under perfect conditions, where no strikes or natural disasters happen, because a one-minute delay in reaching a given traffic light may cause a one-day delay in delivering the asset across the Globe. Quantum computing will allow, through data input from live monitoring sensors across the Globe, routes and available cargo space to be constantly optimized, in a way that could represent a profit increase of as much as 600% over current operational standards or a significant price reduction for clients, while assuring accurate and optimized delivery timings.

Predicting the future – ever watched “Minority Report” with Tom Cruise? In the movie, although through a different process, computation was able to show which potential crimes had an over 90% probability of happening. When dealing with a complex scenario, such as an international crisis, it is “merely” a matter of having computing power that can handle an exponentially larger range of influencing co-factors that may affect the result. A standard computer would take years to reach the most probable outcome of such a crisis, long after the crisis had been “naturally” solved, yet a Quantum Computer could show the top 5 most probable outcomes within a matter of minutes, therefore becoming a priceless decision support tool.

Artificial Intelligence – to begin with, let’s define Intelligence as the ability to acquire new knowledge and change one’s opinion based on such new information. Now, the contribution of Quantum Computing to the potential of AI once again pertains to speed, and this time around to “speed of thought”. How powerful would a “mind” be that could analyze a complex scenario (like the above-mentioned logistics nightmare of a DHL-like company), promptly decide which course of action to take, and work out where to improve processes by recognizing that some established workflow is no longer suitable?

The problem would then be having AIs make decisions and replace them with new ones at such a rate that humans would have no time to understand the underlying motives, and hence no say in the approval or disapproval of such strategic actions.

Safer communications – Quantum Cryptography, what is it?

We have seen that a Quantum Computer has the power to crack our current state-of-the-art encryption pillars, but if it has the power to crack them, it has the power to create something better.

The problem with the message encryption methods within our reach today is that all of them depend on pre-established keys, either single keys or combinations of public and private keys, and those keys are difficult to crack only because of the methods available to standard computers.

Now, Quantum Encryption cleverly exploits what was initially a problem: particles behave like a wave until there is an attempt to observe them, at which point they immediately behave like a particle.

Photons, if paired (or “entangled”, to use the appropriate term), will each maintain their relative spin regardless of space or time. So four pairs of photons, each pair carrying a status “01” conveyed by its spin, and therefore creating a “qubyte” represented by “01010101” (or any other combination, for that matter), will keep this “information” unaltered for as long as they are not “excited”, and any attempt to read the code will immediately destroy it.

This has the power to create effectively unbreakable, foolproof secure messaging.
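The principle that reading the code disturbs it can be imitated with a toy simulation. Note that the sketch below follows the BB84 scheme, a well-known Quantum key distribution protocol based on single photons sent in randomly chosen “bases”, rather than the entangled-pair scheme described above; it is swapped in because it is simpler to simulate. All names are made up for this example, and ordinary random numbers stand in for the physics.

```python
import random


def bb84_error_rate(n_photons: int, eavesdrop: bool, seed: int = 0) -> float:
    """Toy BB84 run: error rate seen by sender and receiver in their sifted key."""
    rng = random.Random(seed)
    errors = kept = 0
    for _ in range(n_photons):
        bit, sender_basis = rng.randint(0, 1), rng.randint(0, 1)
        photon_bit, photon_basis = bit, sender_basis
        if eavesdrop:
            spy_basis = rng.randint(0, 1)
            if spy_basis != photon_basis:
                photon_bit = rng.randint(0, 1)   # wrong basis: the reading is random
            photon_basis = spy_basis             # the spy re-sends in her own basis
        receiver_basis = rng.randint(0, 1)
        if receiver_basis == photon_basis:
            received = photon_bit
        else:
            received = rng.randint(0, 1)         # wrong basis: the reading is random
        if receiver_basis == sender_basis:       # keep only rounds with matching bases
            kept += 1
            errors += received != bit
    return errors / kept


print(bb84_error_rate(2000, eavesdrop=False))
print(bb84_error_rate(2000, eavesdrop=True))
```

Without a spy, the kept bits match perfectly. With a spy measuring every photon, roughly one kept bit in four comes out wrong, so comparing a small sample of the key is enough to reveal the intrusion.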

P.S.: This is a guest post published at the request of Karl Crisostomo of tenfold.com and refers to our earlier article titled “Section 65B interpretation in the Quantum Computing Scenario”

Naavi