The Digital Governance and Protection Standard of India (DGPSI) is meant to be a guidance framework for compliance with DPDPA 2023, along with ITA 2000 and the BIS draft standard on Data Governance. It is therefore natural to reflect on how DGPSI addresses the use of an AI algorithm by a Data Fiduciary during the process of compliance.

Let me place some thoughts here for elaboration later.

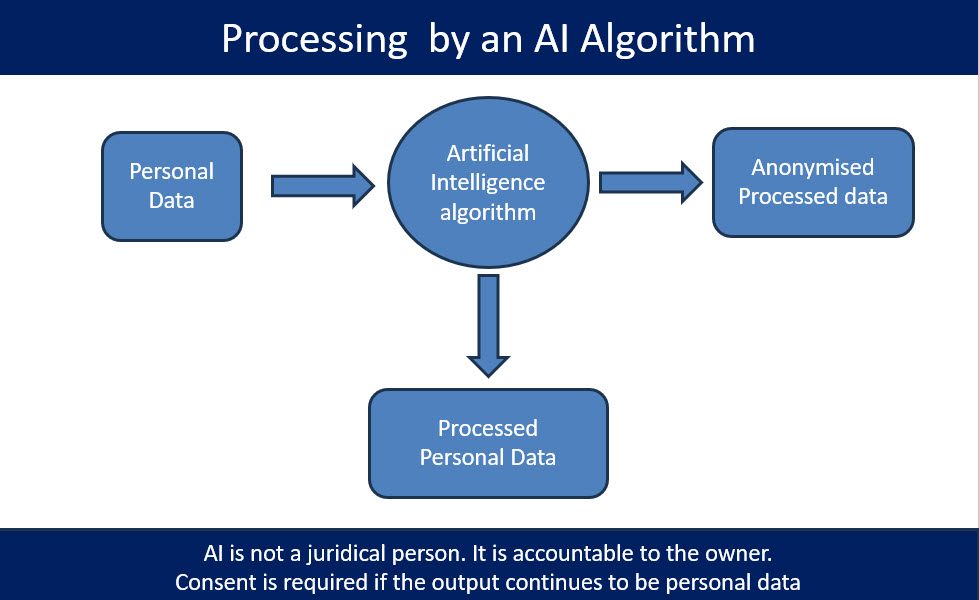

DGPSI addresses compliance in a “Process Centric” manner. In other words, compliance is assessed process by process, and the entity is viewed as an aggregation of processes. Hence each process, including each AI algorithm used, becomes a compliance subject and has to be evaluated for:

- a) What Personal Data enters the system

- b) What processed data exits the system

- c) Whether any human, including the “Admin”, has access to the data in process within the system

If the algorithm is configured for black box processing with no access to data during processing, we can say that personal data enters in one status and leaves in another. If the entry status of the data is “Identifiable to an individual”, it is personal data. If the exit status is also identifiable to an individual, then the modification from the entry status to the exit status, and the end use of the exit status data, should be based on consent.

If the output data is not identifiable to an individual, then it is out of the scope of DPDPA. The black box is therefore both a data processor and a data anonymiser. ITA 2000 does not consider “Software” as a juridical entity, and its activity is accountable to the owner of the software. Since the owner of the software in this case had access to identity at the input level and lost it at the output level, the process is similar to an “Anonymization” process. In other words, the AI algorithm would have functioned as a sequence of two processes, one involving personal data modification and another involving anonymization. The anonymization can take place before or after the personal data processing.
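The entry and exit reasoning above can be sketched as a small decision function. This is only an illustration: the names `ProcessRole` and `classify_process` and the two boolean flags are hypothetical and are not part of DGPSI. The branch for data that is not identifiable at entry is an assumption inferred from the definition of personal data given earlier.

```python
from enum import Enum


class ProcessRole(Enum):
    """Hypothetical labels for how a black-box AI process is treated."""
    OUT_OF_SCOPE = "no personal data enters the process"
    PROCESSOR = "personal data in, personal data out (consent based)"
    PROCESSOR_AND_ANONYMISER = "personal data in, non-identifiable data out"


def classify_process(input_identifiable: bool, output_identifiable: bool) -> ProcessRole:
    """Classify a black-box process by the status of data at entry and exit."""
    if not input_identifiable:
        # Assumption: data not identifiable at entry is not personal data.
        return ProcessRole.OUT_OF_SCOPE
    if output_identifiable:
        # Identifiable at both ends: modification and end use rest on consent.
        return ProcessRole.PROCESSOR
    # Identity was available at input and lost at output.
    return ProcessRole.PROCESSOR_AND_ANONYMISER


print(classify_process(input_identifiable=True, output_identifiable=False).name)
```

Under these assumptions, a process that receives identifiable data and emits non-identifiable data is labelled as both processor and anonymiser, which mirrors the two-step view described above.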

Under this principle, the owner of the AI is considered a “Data Processor”, and accordingly the data processing contract should include an indemnity clause. Since the AI code is invisible to the data fiduciary, it is preferable to treat the AI processor as a “Joint Data Fiduciary”.

There is one school of thought that “Anonymization” of “Personal Data” also requires consent. While I do not strictly subscribe to this view, if the consent covers the use of “Data Processors” including “AI algorithms”, this risk is covered. In our view, just as “Encryption” may not need a special consent and can be considered a “Legitimate Use” for enhancing the security of the data, “Anonymization” also does not require a special consent.

Hence an AI process which includes “Anonymization”, with an assurance that the data under process cannot be captured and taken away by a human being, is considered as not requiring consent.
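The consent position can be expressed as a simple check over the three evaluation questions. This is a minimal sketch under stated assumptions: `AIProcess` and `needs_specific_consent` are hypothetical names, and treating an anonymizing process with human access to in-process data as still requiring consent is a conservative assumption, since the text only addresses the case where that assurance holds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIProcess:
    """Hypothetical record of the three evaluation questions for one process."""
    name: str
    input_identifiable: bool       # does personal data enter the system?
    output_identifiable: bool      # is the exiting data identifiable?
    human_access_in_process: bool  # can any human, including the Admin, see data in process?


def needs_specific_consent(process: AIProcess) -> bool:
    """Return True when the process should be backed by consent."""
    if not process.input_identifiable:
        # Assumption: no personal data enters, so consent does not arise.
        return False
    if process.output_identifiable:
        # Identifiable output: modification and end use should be based on consent.
        return True
    # Anonymized output: consent is not needed only if no human can capture
    # the data in process (conservative assumption when the assurance fails).
    return process.human_access_in_process


scoring = AIProcess("credit-scoring", True, False, False)
print(needs_specific_consent(scoring))  # False
```

Here a process with identifiable input, non-identifiable output and no human access to data in process returns `False`.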

This is the current DGPSI view of an AI algorithm used for personal data processing.

Open for debate.

Naavi